Acrobatiq is the only learning science-based creation and delivery platform that brings unprecedented scale to courseware development.

- Convert static content to interactive courses in just hours

- Build online learning experiences from scratch and enhance OER

- Turn students from passive readers into active learners

- Improve learning, knowledge retention, and persistence

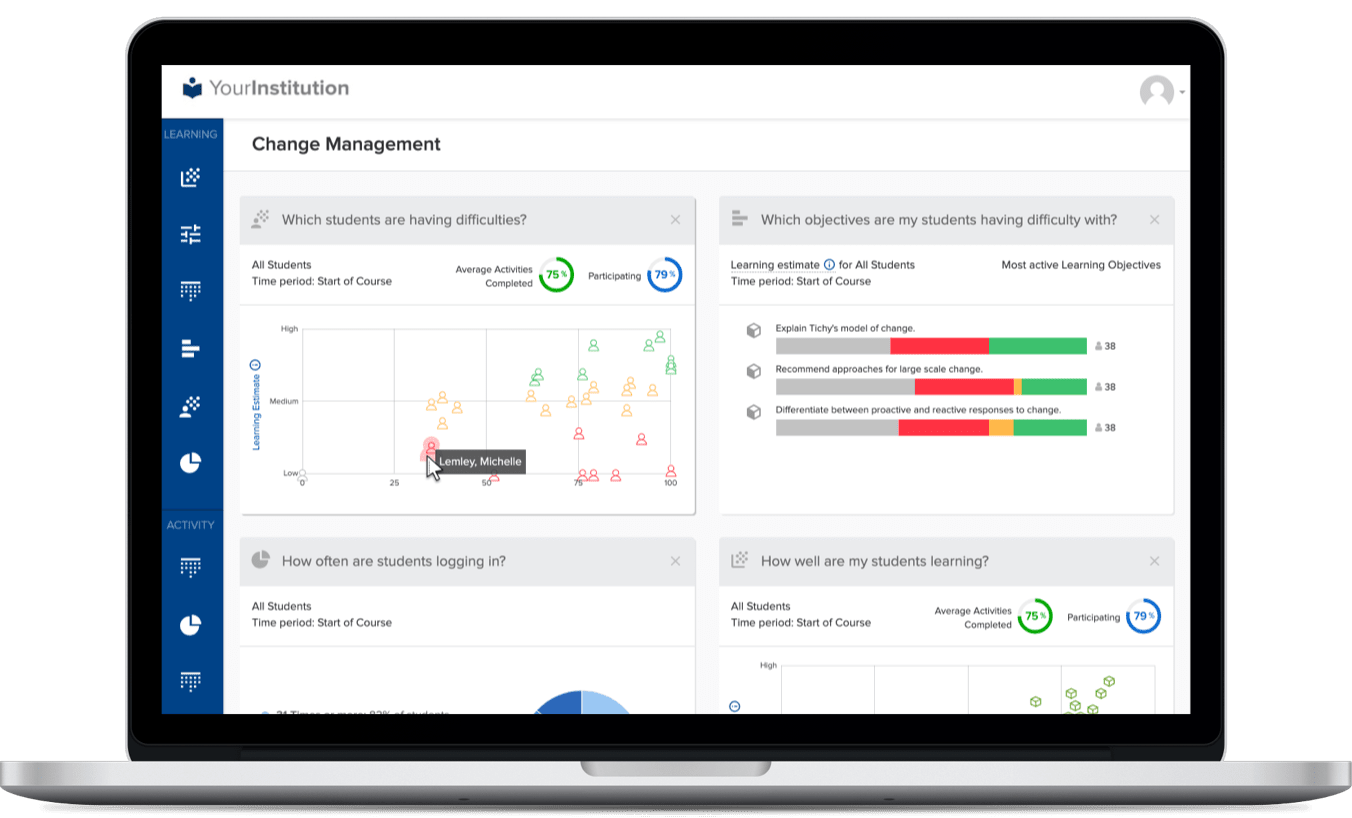

- Identify at-risk learners, spot trends, and continuously refine courses and student outcomes with Acrobatiq’s powerful data analytics

One Courseware Platform for All Your Needs

A Research-Based Approach

Acrobatiq is founded on 12 years of research at Carnegie Mellon's Open Learning Initiative, which found strong correlations between learning activities and learning gains.

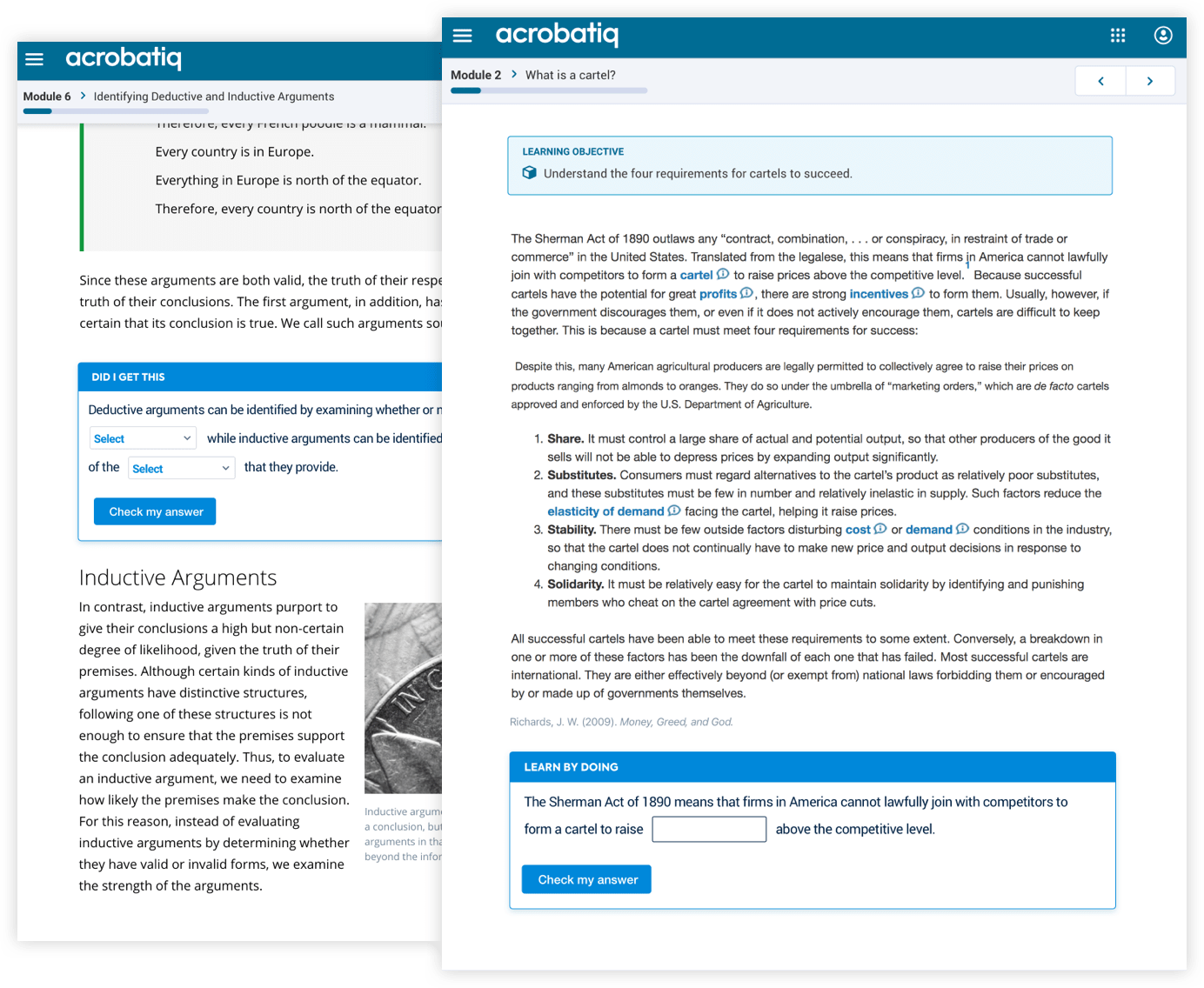

Active Learning

Our technology delivers active, personalized learning through embedded practice, which creates the Doer Effect—a proven learning design principle.

Data-Driven Design

Rich data analytics create predictive Learning Estimates for each student by learning objective—allowing instructors to measure learning, intervene, and provide targeted support.

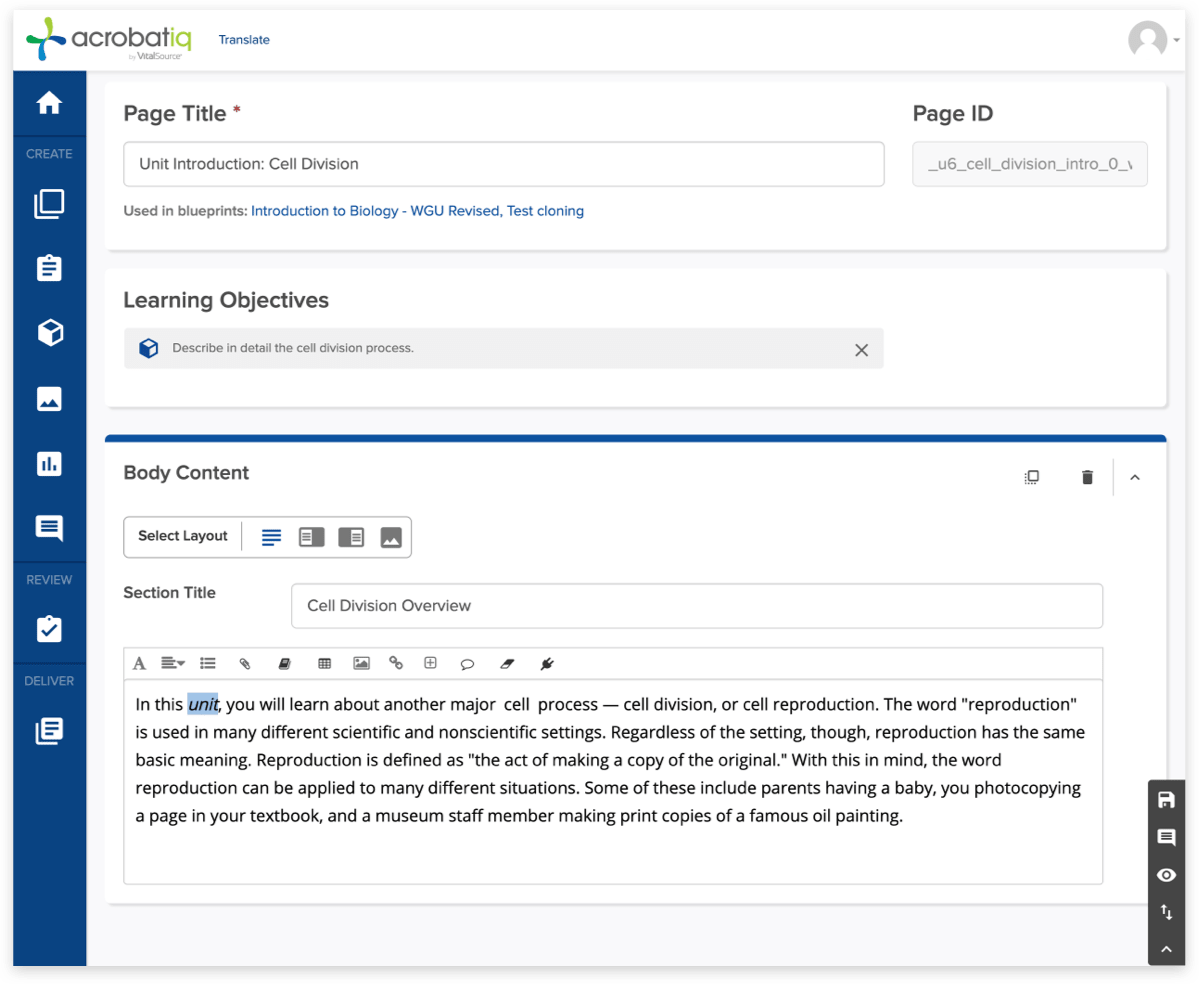

Course Development Reimagined

Our AI engine, SmartStart, uses natural language processing and machine learning to automate the conversion of static content into interactive courseware in hours. With one click, SmartStart creates topical lessons, identifies clear learning objectives, and generates embedded practice.

Create, Enhance, and Customize Your Course

- Give course design teams access to a comprehensive and cutting-edge course design canvas with SmartAuthor

- Quickly build, customize, and continuously improve courseware

- Support multiple content, assessment, and question types, providing flexibility in overall course design

- Build in adaptive practice

The Doer Effect has 6X the learning benefit of just reading